Advantages:

- Universal and real-time detection across image, video, and audio domains

- Lightweight and highly efficient, operating orders of magnitude faster than existing defenses

- Achieves 98% detection accuracy, significantly outperforming other methods

- Compatible with any Deep Neural Network (DNN) without requiring modifications

Summary:

Deep Neural Networks (DNNs) are widely used in machine learning applications but remain vulnerable to adversarial attacks: small, imperceptible input manipulations that cause AI models to make incorrect predictions. Current defense strategies attempt to negate perturbations or use secondary detection models, but these approaches struggle against rapidly evolving attack techniques and often fail to provide real-time protection.

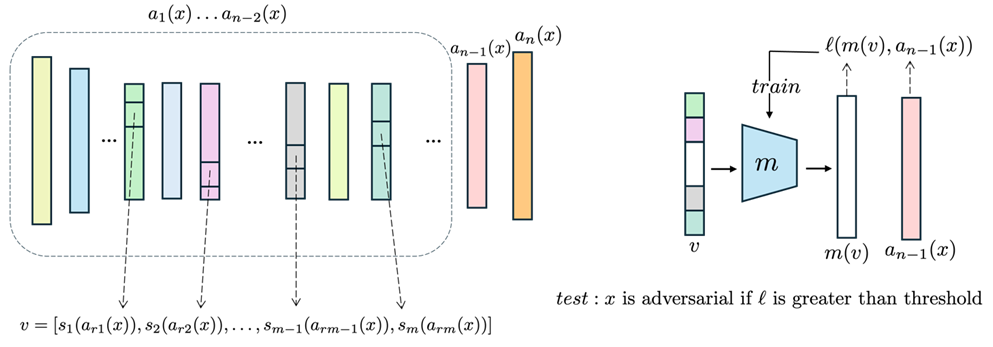

Layer Regression (LR) is a universal, real-time adversarial detection method that identifies attacks by analyzing layer-wise distortions within DNNs. Instead of relying on input or output manipulations, LR uses a multi-layer perceptron (MLP) model to estimate feature vectors from early layers of the network. Higher estimation errors indicate adversarial samples, enabling fast and accurate detection. Tested across 672 attack-dataset-model-defense combinations, LR outperforms existing methods with a 98% detection rate while being orders of magnitude faster, making it ideal for real-time AI security applications.

The image illustrates the Layer Regression (LR) adversarial detection framework: the layer selection and slicing operations that form the input vector are shown on the left, and the training and testing procedures on the right. LR leverages the fact that adversarial samples have a greater impact on the final layers of a DNN than on the initial layers, and it detects the resulting distortions by analyzing layer-wise deviations.
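The detection idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the LR implementation: it assumes the regression target is the final-layer feature vector (the summary does not say so explicitly), uses a linear least-squares fit as a stand-in for the MLP, picks the threshold as a quantile of clean-sample errors, and runs on synthetic features rather than a real DNN. All function names and parameters (`slice_features`, `fit_regressor`, `n_per_layer`, `quantile`) are hypothetical.

```python
import numpy as np


def slice_features(layer_outputs, n_per_layer):
    """Keep the first n_per_layer values of each selected layer and concatenate.

    Hypothetical stand-in for the layer selection and slicing step.
    """
    return np.concatenate([np.ravel(out)[:n_per_layer] for out in layer_outputs])


def _with_bias(X):
    # Append a constant column so the linear fit can learn an offset.
    return np.hstack([X, np.ones((X.shape[0], 1))])


def fit_regressor(X, Y):
    """Fit a linear map from early-layer features X to final-layer features Y.

    Assumption: linear least squares replaces the MLP to keep the sketch short.
    """
    W, *_ = np.linalg.lstsq(_with_bias(X), Y, rcond=None)
    return W


def estimation_error(W, X, Y):
    """Per-sample mean squared error between predicted and observed features."""
    return np.mean((_with_bias(X) @ W - Y) ** 2, axis=1)


def calibrate_threshold(clean_errors, quantile=0.99):
    """Assumed rule: flag anything above a high quantile of clean errors."""
    return float(np.quantile(clean_errors, quantile))


def demo(seed=0):
    """Synthetic demo: returns (adversarial detection rate, clean false-positive rate)."""
    rng = np.random.default_rng(seed)
    A = rng.normal(size=(12, 4))  # hidden clean relation between early and final features

    def clean_batch(n):
        X = rng.normal(size=(n, 12))
        Y = X @ A + 0.01 * rng.normal(size=(n, 4))
        return X, Y

    X_train, Y_train = clean_batch(400)
    X_cal, Y_cal = clean_batch(200)
    X_test, Y_test = clean_batch(100)

    W = fit_regressor(X_train, Y_train)
    threshold = calibrate_threshold(estimation_error(W, X_cal, Y_cal))

    # Simulated attack: final-layer features shift strongly, early ones do not.
    Y_adv = Y_test + 1.0

    detected = estimation_error(W, X_test, Y_adv) > threshold
    false_pos = estimation_error(W, X_test, Y_test) > threshold
    return float(detected.mean()), float(false_pos.mean())


if __name__ == "__main__":
    print(demo())
```

In this sketch the regressor only ever sees clean samples during fitting and calibration, so it needs no knowledge of any particular attack; a sample is flagged purely because its final-layer features no longer match what the early layers predict.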

Desired Partnerships:

- License

- Sponsored Research

- Co-Development